Surgeon general asks Congress to require warning labels for social media, like those on cigarettes

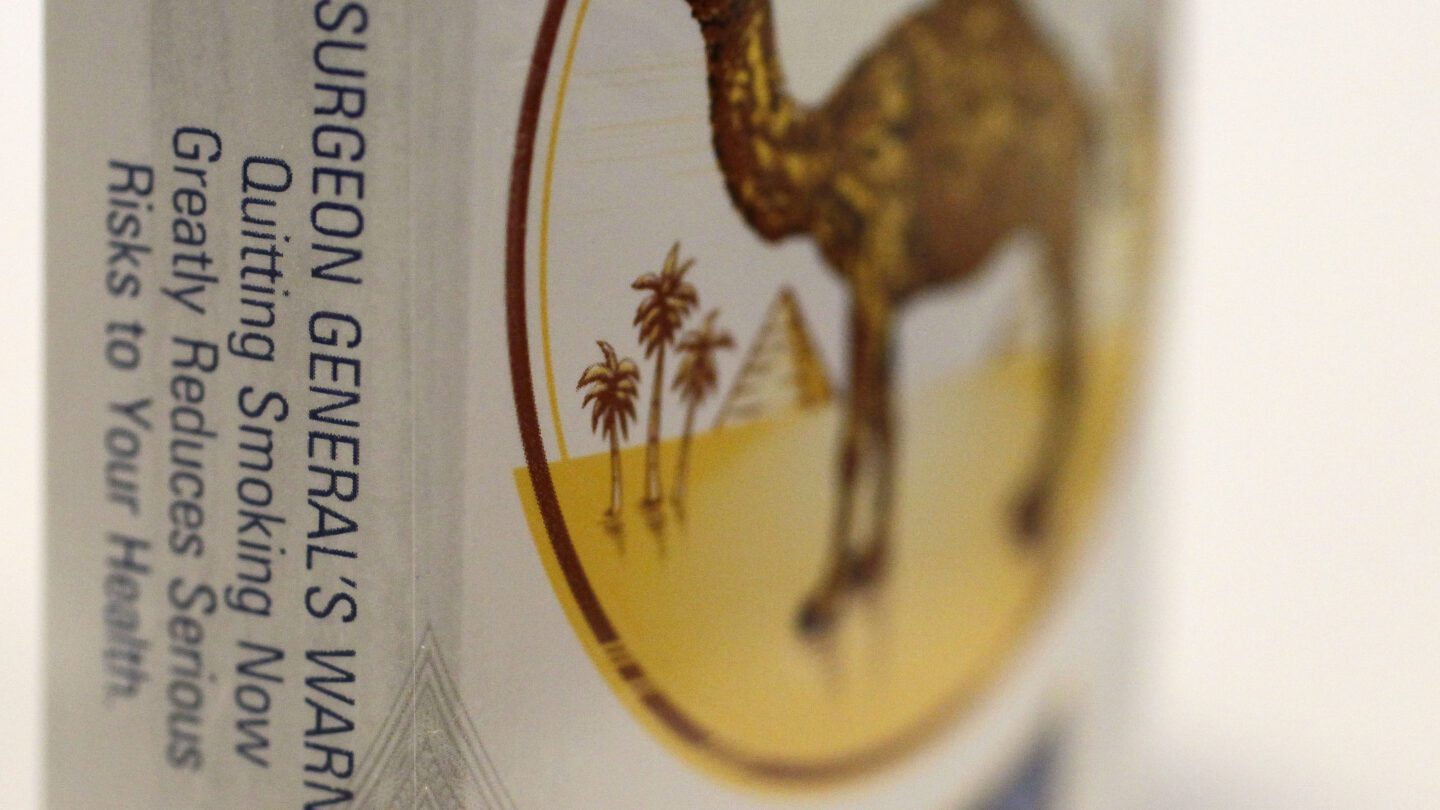

The U.S. surgeon general has called on Congress to require warning labels on social media platforms similar to those now mandatory on cigarette boxes.

In a Monday opinion piece in The New York Times, Dr. Vivek Murthy said that social media is a contributing factor in the mental health crisis among young people.

“It is time to require a surgeon general’s warning label on social media platforms, stating that social media is associated with significant mental health harms for adolescents. A surgeon general’s warning label, which requires congressional action, would regularly remind parents and adolescents that social media has not been proved safe,” Murthy said. “Evidence from tobacco studies show that warning labels can increase awareness and change behavior.”

Murthy said that a warning label alone wouldn't make social media safe for young people, but it would be one of the steps needed.

Social media use is prevalent among young people, with up to 95% of youth ages 13 to 17 saying that they use a social media platform, and more than a third saying that they use social media “almost constantly,” according to 2022 data from the Pew Research Center.

Last year Murthy warned that there wasn’t enough evidence to show that social media is safe for children and teens. He said at the time that policymakers needed to address the harms of social media the same way they regulate things like car seats, baby formula, medication and other products children use.

To comply with federal regulation, social media companies already ban kids under 13 from signing up for their platforms — but children have been shown to easily get around the bans, both with and without their parents’ consent.

Other measures social platforms have taken to address concerns about children’s mental health can also be easily circumvented. For instance, TikTok introduced a default 60-minute time limit for users under 18. But once the limit is reached, minors can simply enter a passcode to keep watching.

Murthy believes the impact of social media on young people should be a more pressing concern.

“Why is it that we have failed to respond to the harms of social media when they are no less urgent or widespread than those posed by unsafe cars, planes or food? These harms are not a failure of willpower and parenting; they are the consequence of unleashing powerful technology without adequate safety measures, transparency or accountability,” he wrote.

In January the CEOs of Meta, TikTok, X and other social media companies testified before the Senate Judiciary Committee amid parents' concerns that the companies are not doing enough to protect young people. The executives touted existing safety tools on their platforms and the work they've done with nonprofits and law enforcement to protect minors.

Murthy said Monday that Congress needs to implement legislation that will protect young people from online harassment, abuse and exploitation and from exposure to extreme violence and sexual content.

“The measures should prevent platforms from collecting sensitive data from children and should restrict the use of features like push notifications, autoplay and infinite scroll, which prey on developing brains and contribute to excessive use,” Murthy wrote.

The surgeon general is also recommending that companies be required to share all their data on health effects with independent scientists and the public, which they currently don’t do, and allow independent safety audits.

Murthy said schools and parents also need to help provide phone-free time, and that doctors, nurses and other clinicians should help guide families toward safer practices.

While Murthy pushes for more to be done about social media in the United States, the European Union enacted groundbreaking new digital rules last year. The Digital Services Act is part of a suite of tech-focused regulations crafted by the 27-nation bloc — long a global leader in cracking down on tech giants.

The DSA is designed to keep users safe online and make it much harder to spread content that’s either illegal, like hate speech or child sexual abuse, or violates a platform’s terms of service. It also looks to protect citizens’ fundamental rights such as privacy and free speech.

Officials have warned tech companies that violations could bring fines worth up to 6% of their global revenue — which could amount to billions — or even a ban from the EU.